As AI became embedded across enterprise tools, security teams began expecting their most manual workflows to benefit from it too. Third-Party Risk Management (TPRM) was an ideal candidate: time-consuming, repetitive, and nearly impossible to standardize across a growing vendor portfolio.

The TPRM team had done discovery on a new criteria-based assessment model, in which organizations define the security requirements (criteria) vendors must meet rather than relying only on vendor-provided answers. The concept had been validated with a handful of customers. Our AI team partnered with the TPRM team to make the agent the first implementation of that model, designing a workflow that could evaluate vendor documentation against those criteria and produce structured assessment results.

This was a 0-to-1 initiative: Drata's first agent-driven experience, with no internal patterns to follow and no prior interaction models to reference. The design challenge wasn't just "How should this feature work?" but "What does AI-assisted work look like in Drata?"

What made this different from a standard feature launch was that the agent was conceived as the foundation for a future standalone TPRM product, planned for August 2026. The design work here wasn't scoped to a single workflow; it had to be architected to carry a full product.

I led the design of Drata's first AI-assisted workflow, establishing the initial interaction model for agent-driven experiences within the product.

- Established the initial patterns for how AI integrates into product workflows: partnering with the AI Director to translate early agent concepts into concrete interaction models, including explainability, autonomy, and communication patterns.

- Designed a new class of workflow: an end-to-end agent experience embedded within vendor risk reviews, transforming a manual, document-heavy process into an AI-assisted system while preserving user control and trust.

- De-risked a 0→1 product direction: partnering with the AI PM, I led 5 moderated sessions to validate the concept, followed by 3 months of biweekly sessions with enterprise design partners, led jointly by the TPRM and AI teams.

- Enabled a new product surface: the TPRM Agent serves as the foundation for a standalone TPRM product (roadmapped for August 2026), which required design decisions that scale beyond a single feature.

Before defining the experience, we needed to answer a foundational question: what role should AI play in this workflow, and how much autonomy should it have?

We landed on a deliberate position: an AI agent that does the analysis, shows its work, and preserves human judgment at every meaningful decision point.

This was the right model for the context. Third-Party Risk Management involves high-stakes decisions that professionals must defend to leadership and auditors. As a result, users need to stay in the loop: reviewing AI outputs, signing off on decisions, and controlling how much autonomy the system has. This framing (AI as an assistive layer, not a replacement) became the organizing principle for every interaction decision that followed.

Review flows — existing workflow with and without the agent layer

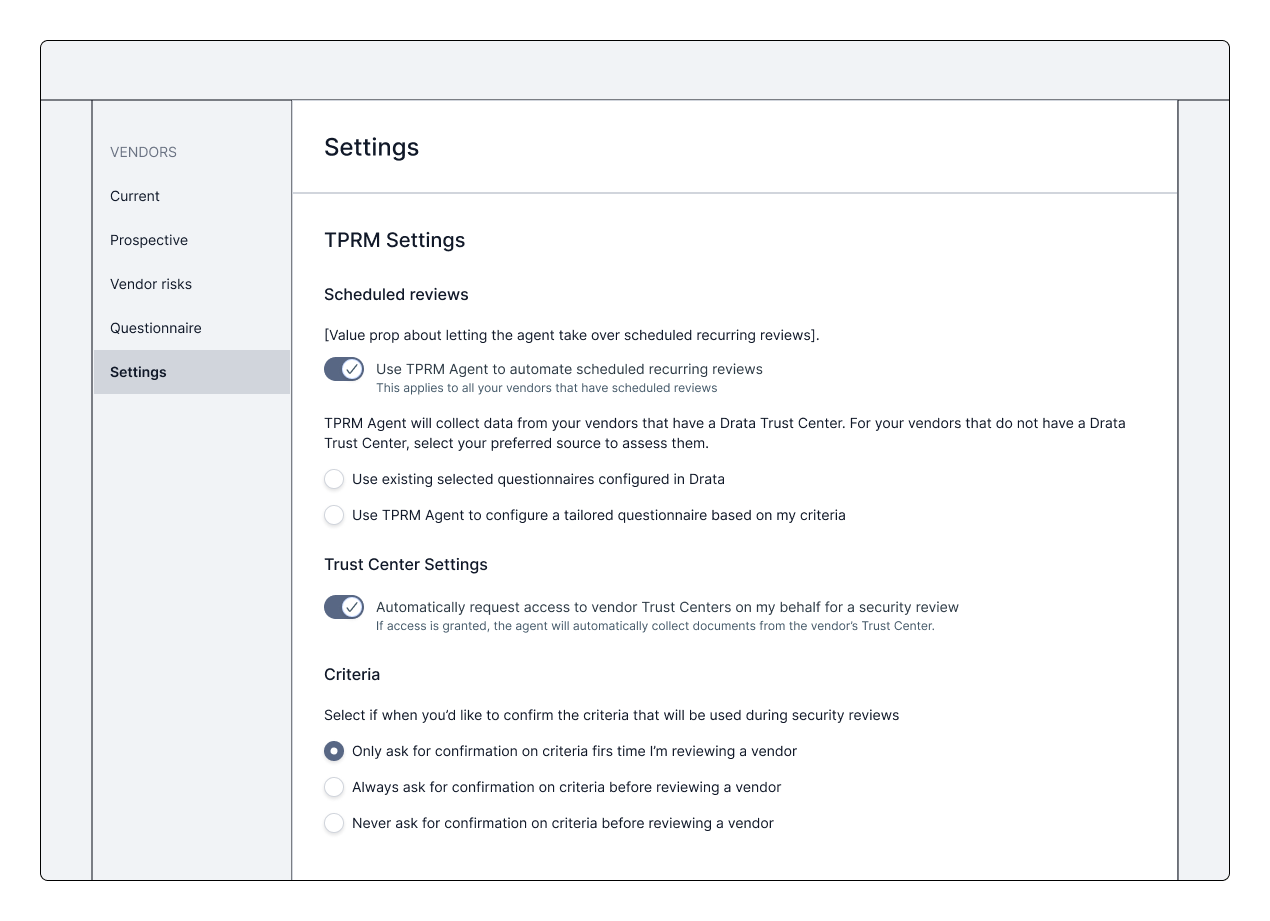

Organizations can tune how much responsibility the agent takes on within the workflow.

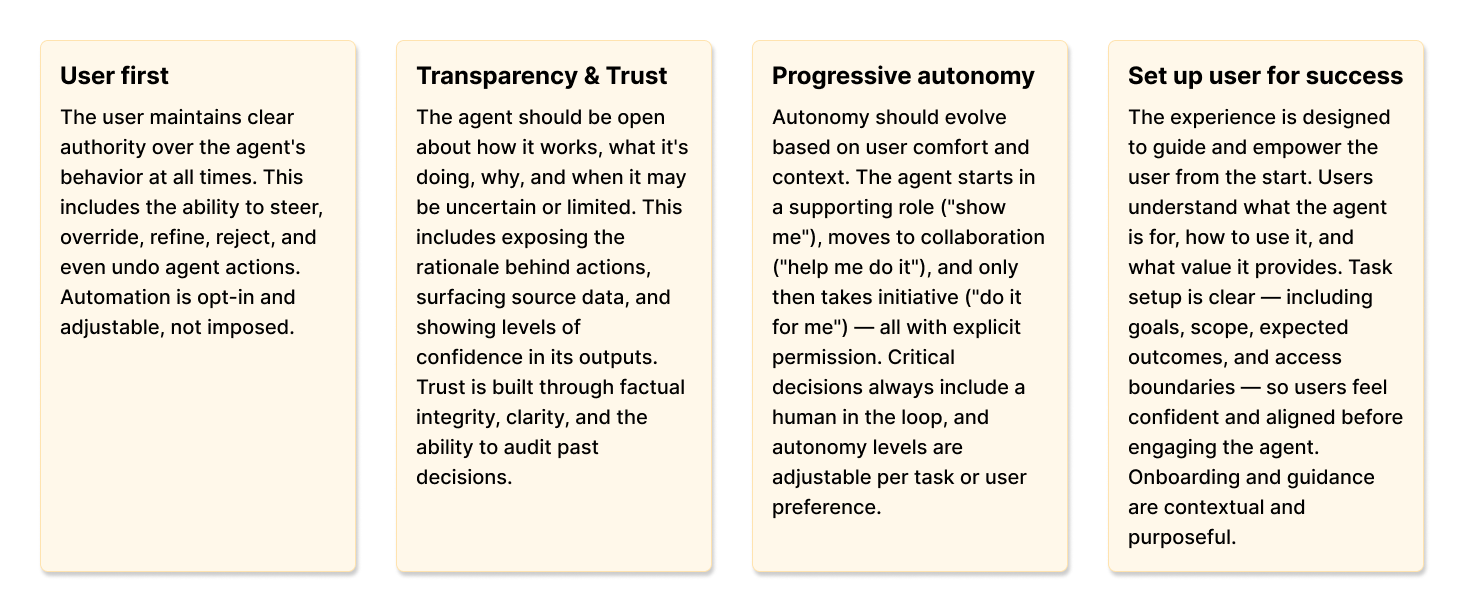

As part of this initiative, I partnered with the AI Director, AI PM, and TPRM designer to define Drata's AI design principles and personality model (levels of presence and autonomy) from the ground up. We synthesized themes from working sessions into a cohesive framework, which I later refined and translated into final language. These principles now guide AI feature development across the platform.

AI Design principles

These are the four design principles (out of six) that mattered most for this project.

AI design principles — synthesized from cross-team working sessions, now applied across all AI features at Drata

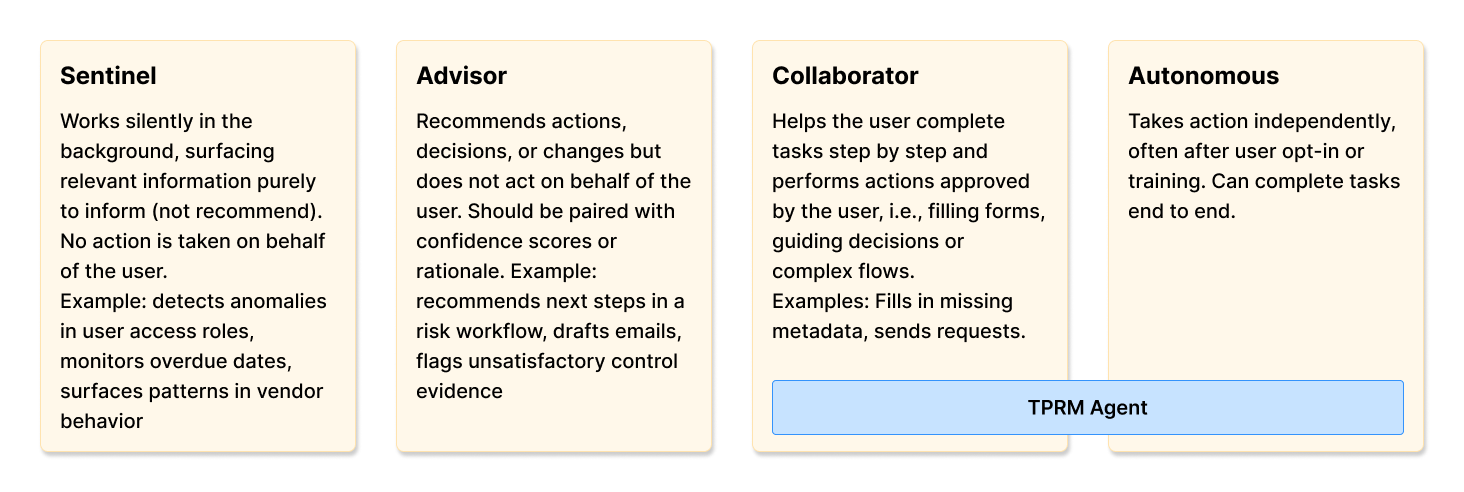

Personalities

The TPRM Agent operates primarily at the Collaborator level, helping users complete tasks step by step, with approval required at key moments. For vendors with a Trust Center in Drata, limited Autonomous behavior is permitted through configurable autonomy settings: users can give the agent permission to request access to a vendor's Trust Center, collect documents automatically, and run the assessment.

Four autonomy levels — the TPRM Agent operates at Collaborator, with limited Autonomous behavior for Trust Center vendors

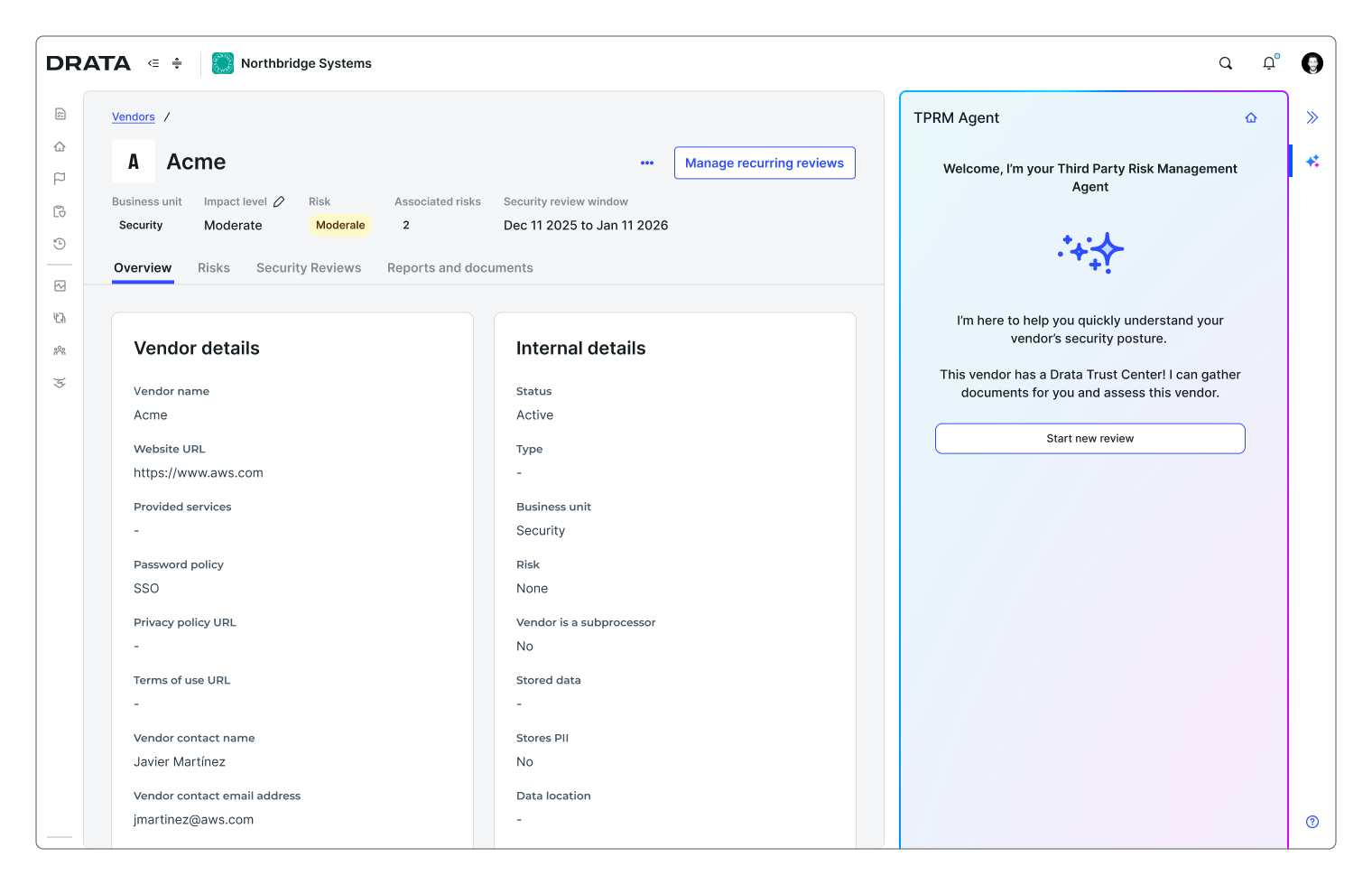

The core structural decision was to introduce a dedicated AI surface on top of the existing vendor review workflow, rather than replace the manual process altogether. This gave users a clear place to interact with the agent while preserving the familiar underlying workflow. Teams with stricter policies around AI could still rely on the manual path, while others could adopt AI assistance progressively.

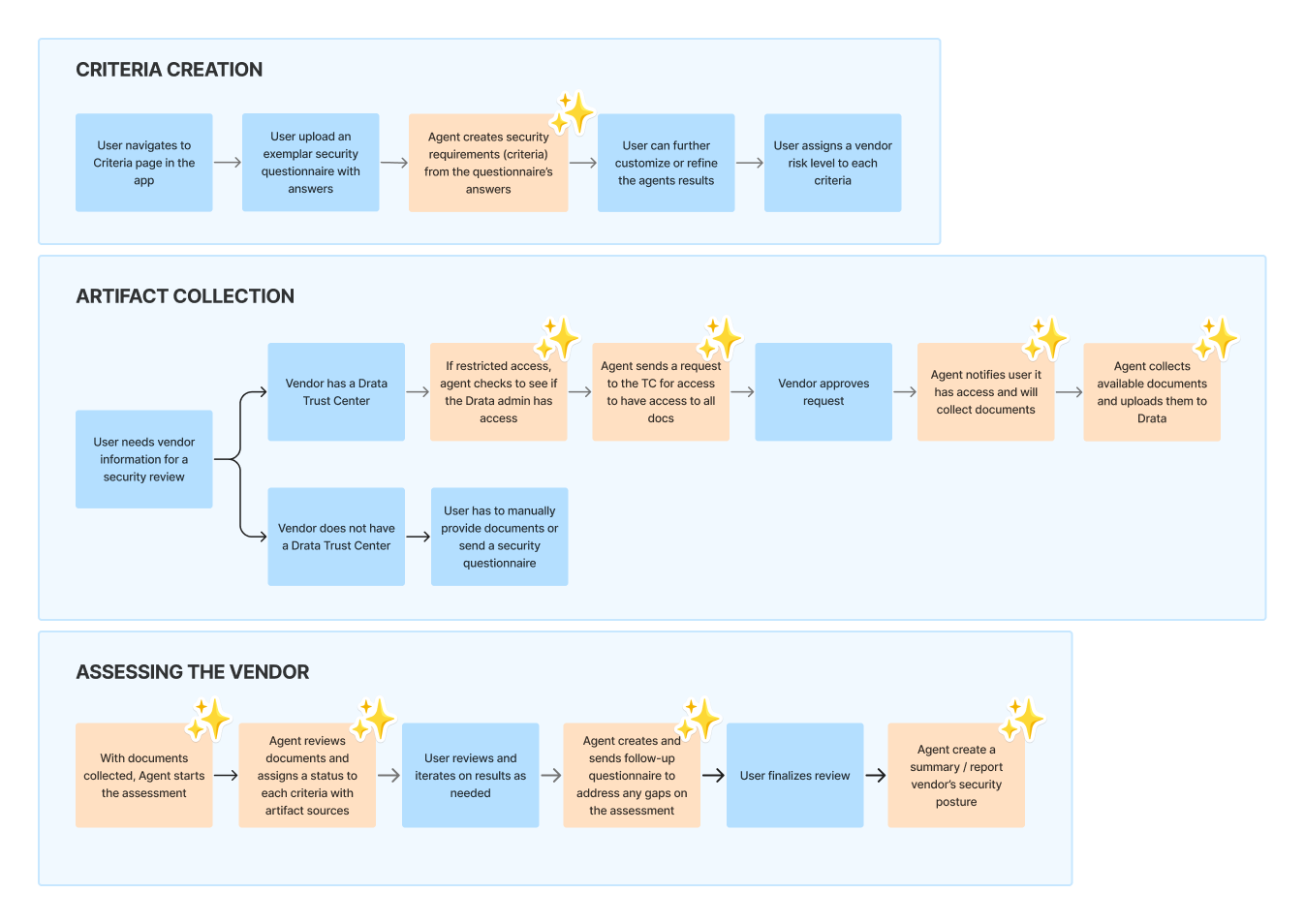

The workflow runs in five stages:

Users upload an existing questionnaire, and the system parses it into structured evaluation criteria. Organizations keep their mental model; the agent makes it machine-readable.

Criteria creation — parsing a vendor questionnaire into structured evaluation criteria

An initiation surface within the vendor profile allows users to start a review when needed, without disrupting the default workflow.

Agent entry point — accessible within the vendor profile, without disrupting the default workflow

The agent processes vendor documentation. For vendors with a Drata Trust Center, documentation can be retrieved automatically, removing one of the most manual steps in the process. The experience was designed to support future expansion to additional Trust Center providers.

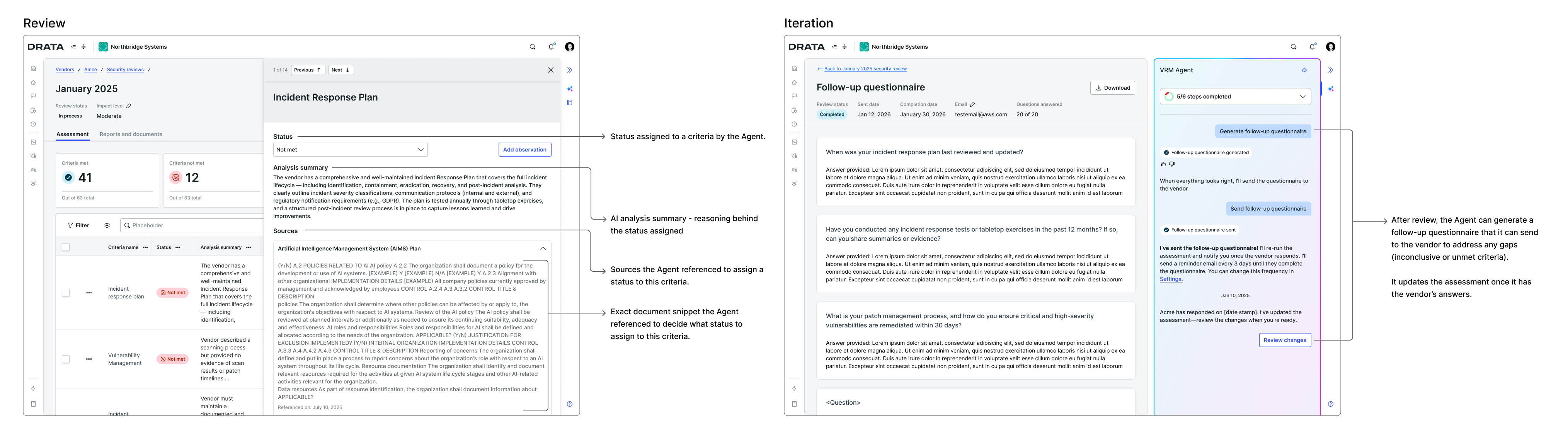

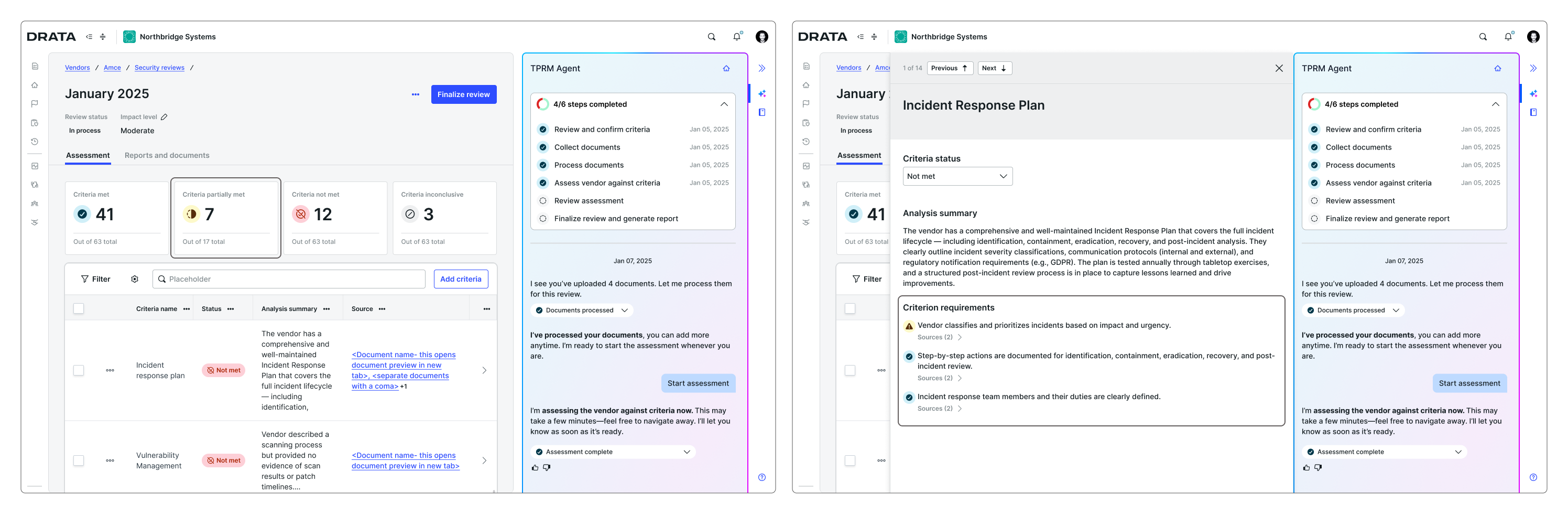

The agent evaluates documentation against each criterion and returns one of three results (Met, Not Met, or Inconclusive), along with supporting explanations and evidence references.

Guided experience: high user involvement, especially for vendors without a Drata Trust Center

Autonomous experience for vendors with a Drata Trust Center

Users review the agent's outputs, adjust results where needed, and validate the assessment before proceeding. For criteria marked as Not Met or Inconclusive, the agent generates a follow-up questionnaire to request additional information from the vendor. Once responses are received, the agent re-runs the assessment and highlights any changes, allowing users to iteratively refine the evaluation.

Review and iteration — users validate results and can trigger re-assessment after additional vendor responses

Observations can be flagged as potential risks. The agent then generates a structured report, exportable as PDF, that includes an executive summary, criteria results, evidence references, and the observations flagged as risks.

Risk creation and reporting — flagging observations as risks and generating the assessment report

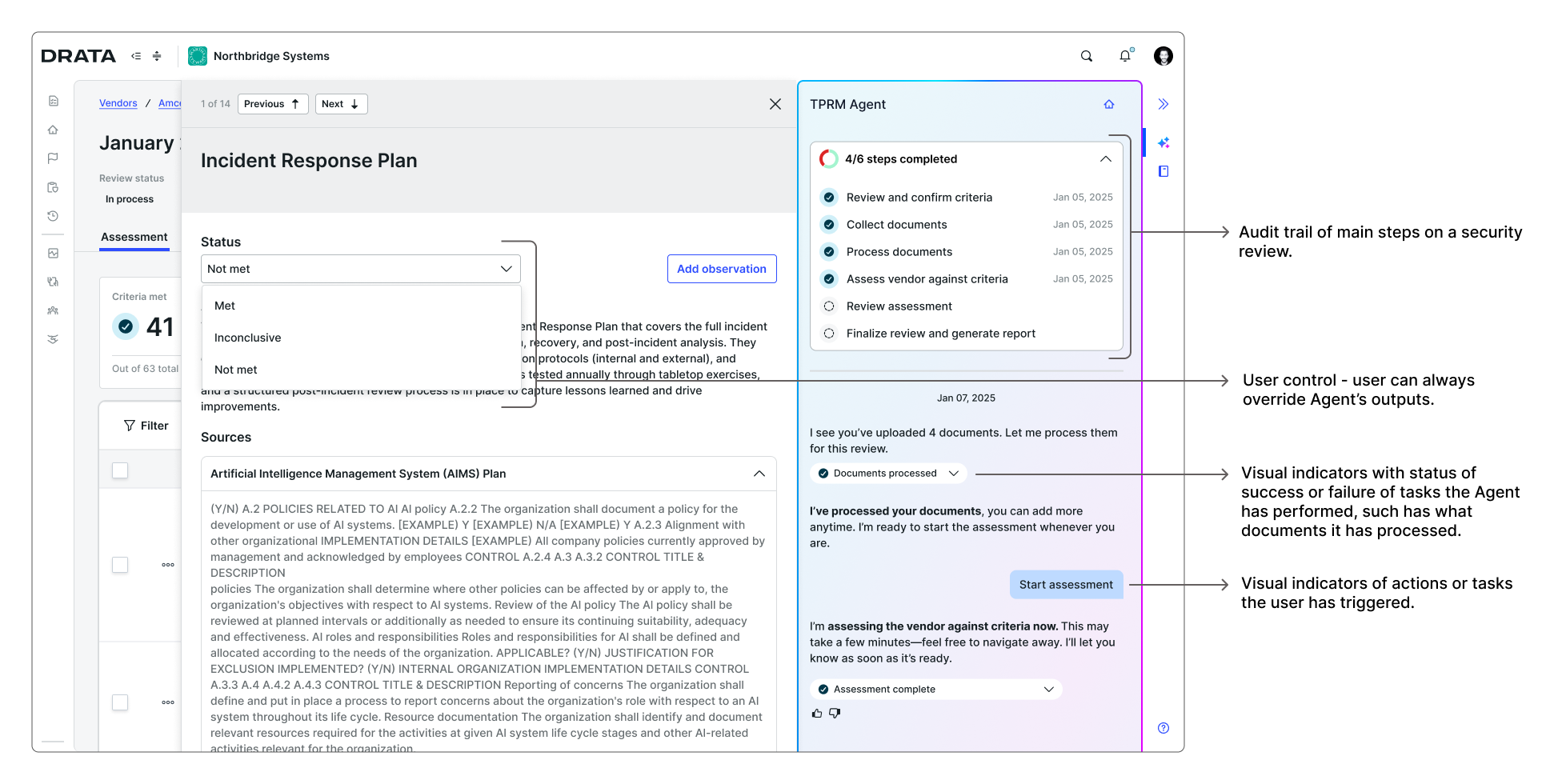

Transparency and system behaviors

Beyond the core workflow, we needed to define how the system behaves, ensuring transparency, auditability, and user control at every step.

Every step in the assessment process is recorded, including how criteria were generated, what evidence was analyzed, and how results evolved over time. This ensures decisions can be reviewed, audited, and defended when needed.

Users can inspect not just the final assessment, but the reasoning behind it — including requirement-level status and supporting evidence.

AI-generated results can be reviewed, adjusted, or overridden at any point, ensuring human judgment remains the final authority.

Transparency and system behaviors — audit trail, explainability, and user override controls

These behaviors were designed as foundational to the interaction model rather than as secondary features, so the agent supports decision-making without removing accountability.

The research process was structured in two phases. The goal of the first phase was to understand whether this workflow could be trusted and adopted in a real vendor review process. The goal of the second phase was to calibrate our product.

Phase 1: Concept validation (5 moderated sessions)

Partnering with the AI PM, I ran 5 moderated sessions with participants to test whether the criteria concept aligned with their mental model, identify which documents they gathered most frequently (which we would use to test our AI model), and understand what it would take for them to trust the agent's outputs.

Participants worked through interactive prototypes, completing tasks such as initiating a review, uploading documentation, and evaluating AI-generated results.

The sessions confirmed the criteria concept was sound and surfaced the core condition for adoption: users were open to AI-assisted assessments if the system made its reasoning visible and kept them in control of final decisions.

Phase 2: Design partner refinement (6 months, biweekly sessions)

Starting in mid-December 2025, six enterprise design partner organizations joined biweekly sessions and tested the agent using their own vendor criteria and real documentation rather than simulated scenarios.

This phase was less about validating the concept and more about calibrating the experience for real-world use. Design partners helped us refine three critical parts of the model:

- Criteria calibration: we refined how the system interpreted and evaluated criteria so the model better matched how teams assess vendor evidence in practice.

- Assessment states: in addition to Met, Not Met, and Inconclusive, we introduced Partially Met to better reflect how users reason about incomplete or mixed evidence.

- Deeper explainability: we surfaced the status of each underlying requirement within a criterion, so users could see exactly which requirements were met, not met, or inconclusive, rather than seeing only the final criterion status.

These refinements made the agent's outputs more legible, more defensible, and more aligned with how security teams actually make decisions.

Each result surfaces the AI's reasoning, because users need to defend assessments to leadership. Any AI-generated result can be overridden; human judgment always has final authority.

Guided experience vs. conversational AI

We chose to design a guided, structured workflow rather than an open-ended conversational experience.

This was partly a resource constraint, since building a robust conversational system would have required significantly more technical investment. More importantly, though, it was a strategic decision.

This was a new interaction model for our users. Introducing AI into a high-stakes workflow meant we needed to reduce ambiguity, not increase it. A conversational interface would have created too much variability in how users interacted with the system, making outcomes harder to predict, debug, and trust.

The guided experience allowed us to:

- Deliver value faster and get the product into customers' hands early

- Constrain the experience to clear, repeatable workflows

- Set users up for success by making expectations and system behavior explicit

At the same time, we designed this as a stepping stone toward a more flexible, conversational experience. The interaction model, patterns, and system behaviors established here were intentionally structured to support that evolution, allowing us to learn from real usage before introducing more open-ended interactions.

- Strong validation from enterprise design partners: all six Agent design partners were converted into TPRM Standalone design partners, so they are now also helping shape the broader TPRM platform.

- Positive qualitative feedback and adoption signals: design partners highlighted that the agent aligns closely with how their teams already operate, with feedback reinforcing its value in reducing manual effort and improving consistency.

"Third-party risk is one of the most pressing challenges for every CISO. Drata's Agentic TPRM Assessment will fundamentally change how organizations operationalize third-party risk management — bringing rigor, consistency, and scale. Using Agentic AI, security teams can run assessments in minutes, achieve a more accurate risk posture across the supply chain, and operate at AI speed." — CISO, Enterprise customer

- Projected efficiency gains of ~75%: based on testing with design partners, vendor assessments that typically take days can be completed in hours through automated evidence analysis and structured evaluation.

This project gave me the opportunity to put into practice many of the concepts I had been exploring around AI, while working on Drata’s first AI-assisted, agent-driven experience.

A key focus throughout the project was defining what users needed in order to trust the TPRM Agent: confidence that the system could support a high-stakes, manual process and produce reliable, high-quality assessments. Trust became a central design constraint, shaping decisions around explainability, control, and transparency.

Working closely with design partners was especially valuable. Their willingness to engage, provide feedback, and test the agent in a controlled environment gave us direct visibility into how TPRM workflows actually operate. This made it easier to identify gaps, refine the experience, and validate whether our approach held up in real scenarios.

This project also highlighted the importance of strong cross-team collaboration. Two independent teams, AI and TPRM, were building this experience together. Early on, we had to establish clearer ownership, align on goals, and improve how we communicated changes. As we did, the collaboration became more effective and ultimately strengthened the outcome.

Another important takeaway was how critical it was to embed the agent within existing workflows. We intentionally designed AI as a layer on top of users’ current mental models, rather than replacing them. This made the experience feel more approachable and reduced the perceived risk of adopting AI in a high-stakes process.

The scope of the work also pushed me to think beyond the immediate feature. This agent is the first step toward a standalone product, and the first agentic experience at Drata. That meant the patterns we defined needed to scale, not just for this feature but for future AI work across the platform. It shifted how I evaluated decisions, focusing not only on what worked today, but on what would hold as the system expanded.

I continue to apply these learnings in my work on AI-first features, especially in finding the right balance between system capability and user trust in high-risk workflows.